ETL Data Integration: What to Look for

Extract, Transform, Load (ETL) has many practical uses, chief among them are its capabilities for data integration. Although data integration is one of the most common ways businesses choose to use ETL, ETL also has uses in loading data warehouses, augmenting data for profiling, and data migration. So, why do ETL procedures have a hand in so many types of data processes? Why is ETL beneficial for integration? What should a business be looking for in an ETL data integration tool?

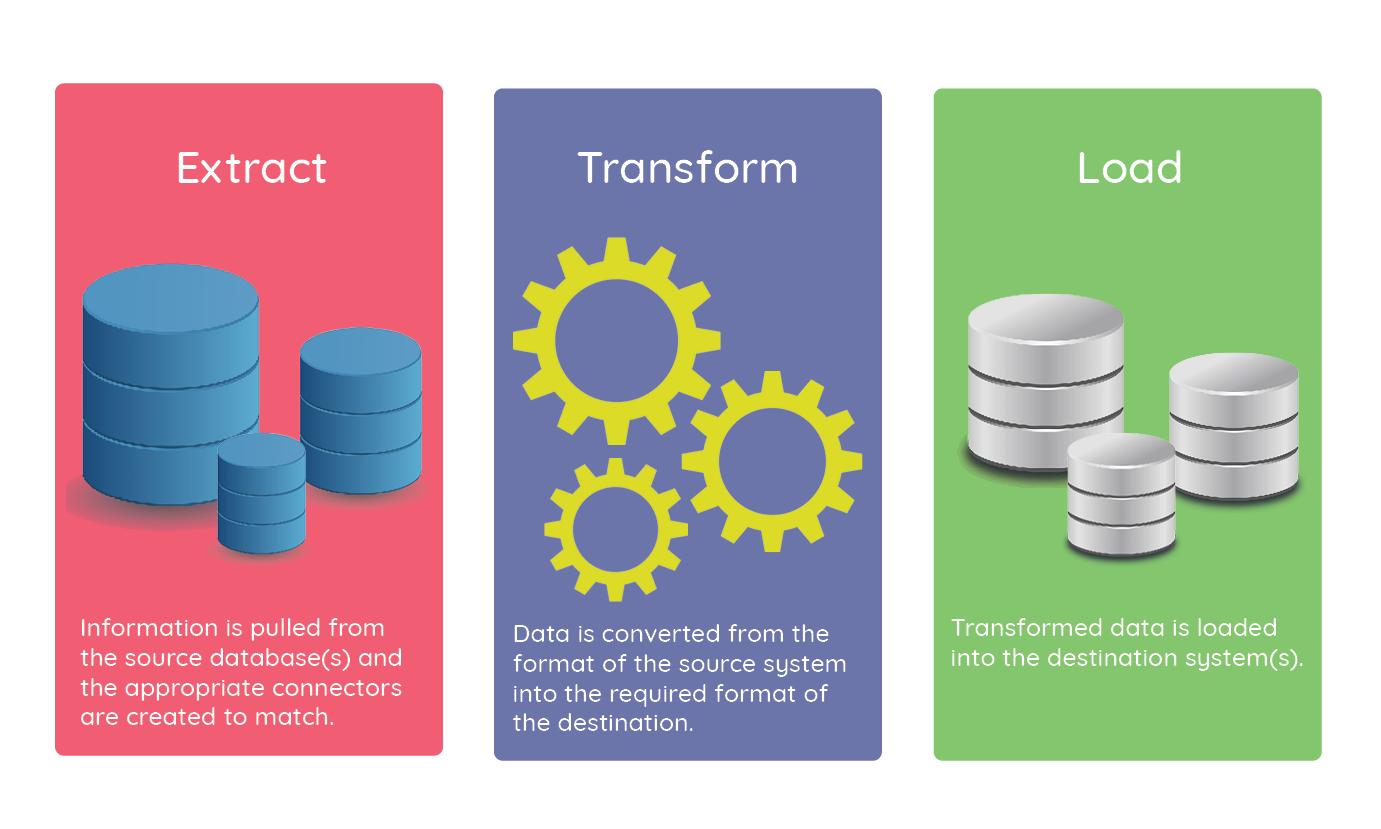

ETL is a process with three specific steps. Step one is to extract data from one or more sources. Step two is to transform that data into a form that is accessible to the target solution, and step three is loading the transformed data. In the midst of the ETL process, developers are carefully monitoring errors to keep things running smoothly. Let’s get into a bit more detail on each aspect of ETL.

Extract: Many organizations collect and store data across numerous platforms and databases. Data integration is used to gather all the information and make it accessible across platforms, thereby creating greater transparency and alignment between teams. The initial step of ETL data integration is to extract data. Before that happens though, the organization doing the data integration must define which pieces of information are significant to their processes. Once the integrating data is established, the ETL tool can be used to create connections that properly sync the information between systems.

If you read through StarfishETL’s website, you’ll see many references to “connectors”. Some solutions offer Native integrations, meaning, they are using the integration Web services / API provided by the manufacturer. Since most manufacturers have their own connector format, it becomes challenging to scale and manage change to software, and to support new softer. StarfishETL takes a different approach. We have a proprietary standard connector format which allows us the build against one format and support and scale to multiple applications with limited changes the program and any maps that were previously built.

In addition to connectors, the extract phase also requires some limited mapping to determine a data retrieval strategy. The data map tells the integration which data matches with which fields. For example, if you’re source system uses the term “Accounts” but your destination system labels accounts as “Companies”, the map must specify the connection between those two fields.

Transform: The extraction of data and creation of connectors establishes the baseline of the ETL process. The next step is to convert that data into a consistent format that meets the structural requirements of the target destination. During the transformation stage, the data must first be cleaned to ensure its integrity. The rest of the process involves setting specific business rules that constrain the data to the correct format.

Load: This is the final step in the ETL process. The load stage is when the transformed data is loaded into its destination system. There are two methods of loading data: doing a full load and doing an incremental load. A full data load is pretty much what you would imagine. The first time data is loaded into the integrating solution, it’s pushed all at once. Because a full load is processing so much data, techniques like concurrent workflow execution and bulk data loading may be used to try and streamline the process.

Incremental loading then synchronizes new or updated data as it is added, allowing the integration to process only the most recent transactions instead of synchronizing all the data every time a new piece of information is logged. This saves time and computing power.

Integrations can be complex beasts. An ETL tool makes managing that complexity easier by creating an organized structure, automatically identifying the correct formats of the data, and setting business rules for how the data will interact. Other key advantages of ETL data integration are:

There are several ETL data integration solutions to choose from out there, so how do you which ones are equipped with the ideal features for your integration? Here are some features to look for in a high-performing ETL:

For help getting started with your next ETL data integration, contact our team. We’ll set up a free consultation to discuss your needs.

Fill out the form below and we will contact you